In the burgeoning field of voice technology, optimizing the Voice User Experience (VUX) is a sophisticated endeavor fraught with unique challenges. VUX designers contend with intricate variables, from timing nuances and intonation accuracy to the unpredictability of human speech and environmental interference.

These factors can turn an otherwise fluid dialogue into a disjointed exchange. For enterprises aiming to scale and perfect their voice-enabled services, they are not mere technicalities but critical turning points in the customer experience journey.

Recognizing this complex landscape, we at Cognigy are excited to introduce a powerful new tool for developers and VUX designers in their quest for voice excellence: Call Tracing.

Visualizing the Unseen: How Call Tracing Changes the Game

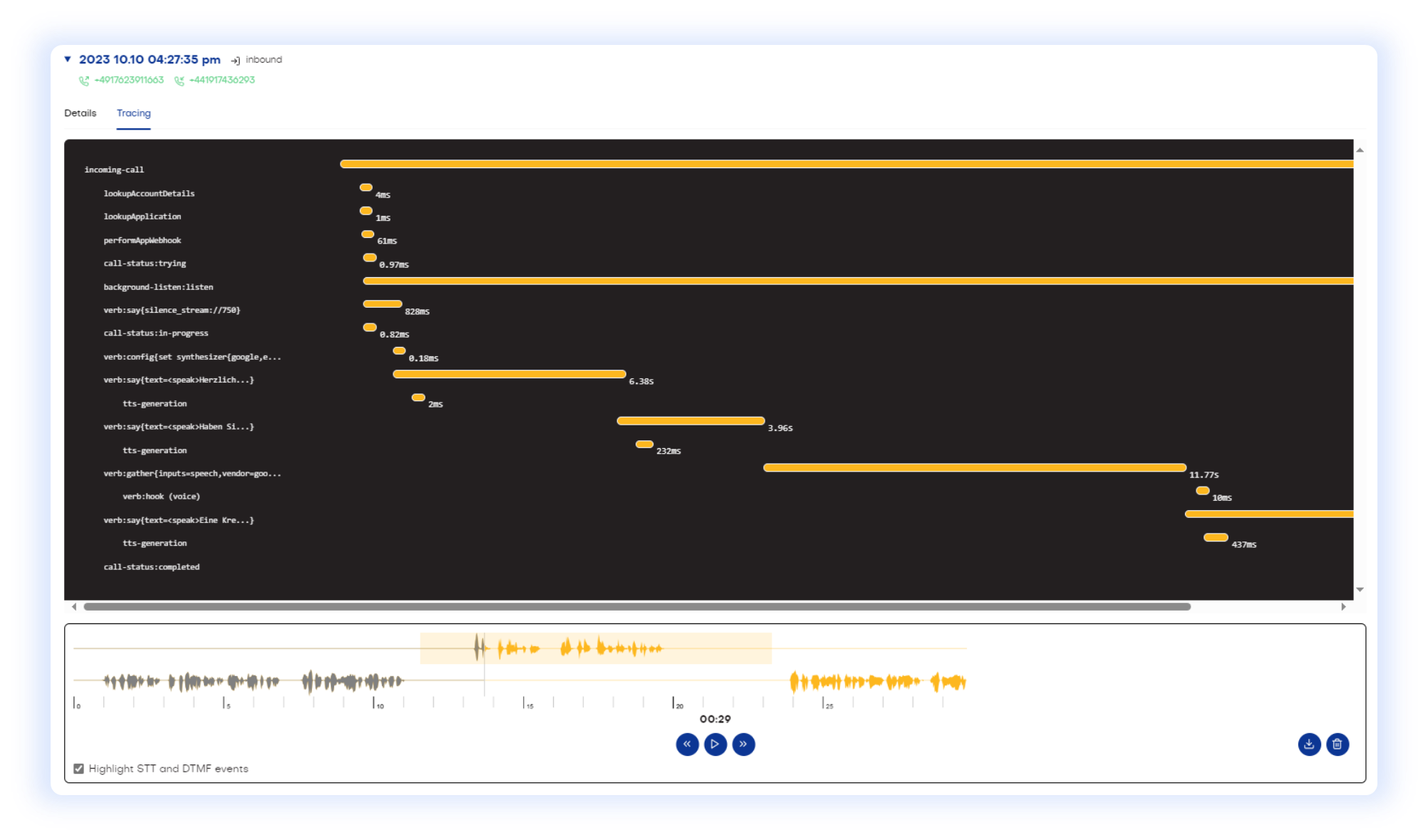

Traditionally, understanding and monitoring voice interactions has required painstaking analysis of transcriptions, user feedback, and basic audio playback. Cognigy’s Call Tracing feature now offers an actionable visualization of all activities that take place during a call, such as speech recognition and connection events, displayed together with the waveforms of the call recording. This gives VUX designers a detailed view of the dynamics of each voice interaction.

Visualizing events alongside the audio waveform provides an analytical perspective that goes beyond listening, enabling professionals to dissect voice experiences at a granular level. It surfaces critical details that are not immediately evident in transcriptions or basic audio playback.

Applying Valuable Insights to Enhance Voice Interactions

1. Optimizing Response Timings

The audio waveforms let you visually identify where silences and pauses occur in the conversation. Extended silences indicate undesirable delays that hinder a seamless user experience.
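Call Tracing surfaces these pauses visually. If you also want to flag them automatically, for instance across a batch of recordings, a minimal sketch like the one below could serve as a starting point. It assumes you have the call audio as mono samples normalized to [-1.0, 1.0]; the function name `find_long_silences`, the 20 ms frame size, the -45 dBFS threshold, and the 1.5 s minimum are hypothetical choices for illustration, not Call Tracing's internal logic.

```python
import math
from typing import List, Tuple


def find_long_silences(
    samples: List[float],
    sample_rate: int,
    frame_ms: int = 20,
    threshold_dbfs: float = -45.0,
    min_silence_ms: int = 1500,
) -> List[Tuple[float, float]]:
    """Return (start_s, end_s) spans where the level stays below
    threshold_dbfs for at least min_silence_ms.

    Hypothetical helper: assumes mono samples in [-1.0, 1.0]; all
    thresholds are illustrative defaults.
    """
    frame_len = max(1, sample_rate * frame_ms // 1000)
    # Minimum number of consecutive quiet frames (integer ceiling division).
    min_frames = -(-(min_silence_ms * sample_rate) // (1000 * frame_len))

    # Mark each full frame as quiet or not, based on its RMS level in dBFS.
    # An incomplete trailing frame is ignored.
    quiet = []
    for start in range(0, len(samples) - frame_len + 1, frame_len):
        frame = samples[start:start + frame_len]
        rms = math.sqrt(sum(s * s for s in frame) / frame_len)
        dbfs = 20 * math.log10(rms) if rms > 0 else float("-inf")
        quiet.append(dbfs < threshold_dbfs)
    quiet.append(False)  # sentinel: closes a quiet run at the end of the audio

    spans = []
    run_start = None
    for i, is_quiet in enumerate(quiet):
        if is_quiet and run_start is None:
            run_start = i
        elif not is_quiet and run_start is not None:
            if i - run_start >= min_frames:
                spans.append((run_start * frame_len / sample_rate,
                              i * frame_len / sample_rate))
            run_start = None
    return spans


if __name__ == "__main__":
    sr = 8000
    tone = [0.5 * math.sin(2 * math.pi * 440 * n / sr) for n in range(sr)]
    # 1 s of speech-like tone, 2 s of silence, 1 s of tone.
    audio = tone + [0.0] * (2 * sr) + tone
    print(find_long_silences(audio, sr))  # prints [(1.0, 3.0)]
```

Working on short frames rather than individual samples keeps the detector from reacting to the zero crossings that occur inside normal speech.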

The call activity report provides a fine-grained breakdown of how long each call activity takes, helping you identify root causes and troubleshoot effectively. For example, by highlighting speech-to-text (STT) and DTMF (touch-tone keypad) events on the audio waveform, you may discover that speech recognition latency is a major contributor to the delays. Going a step further, you can also use Call Tracing to test and compare the performance of different speech services.
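To make the idea of a per-activity breakdown concrete, here is a small sketch that totals the time spent per activity type on a call timeline and ranks the largest contributors first. The `CallActivity` record, its fields, and the activity labels are hypothetical and do not reflect the actual format of the call activity report.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class CallActivity:
    """One activity on a call timeline (hypothetical shape, not Cognigy's format)."""
    kind: str       # e.g. "STT", "TTS", "DTMF"
    start_ms: int
    end_ms: int

    @property
    def duration_ms(self) -> int:
        return self.end_ms - self.start_ms


def time_by_activity(activities: List[CallActivity]) -> Dict[str, int]:
    """Total milliseconds spent per activity kind, largest contributor first."""
    totals: Dict[str, int] = defaultdict(int)
    for activity in activities:
        totals[activity.kind] += activity.duration_ms
    return dict(sorted(totals.items(), key=lambda kv: kv[1], reverse=True))


if __name__ == "__main__":
    timeline = [
        CallActivity("STT", 0, 1800),
        CallActivity("TTS", 1800, 2300),
        CallActivity("STT", 5000, 6900),
        CallActivity("DTMF", 8000, 8200),
    ]
    print(time_by_activity(timeline))  # prints {'STT': 3700, 'TTS': 500, 'DTMF': 200}
```

Running the same aggregation over calls handled by different speech services is one simple way to compare their latency side by side.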

2. Detecting and Resolving Overlapping Speech

Analyzing where the user’s speech starts and ends, and identifying overlapping speech between the AI Agent and the user, is another crucial aspect of voice conversation design. Such overlaps disrupt the dialogue flow and can cause the AI Agent to miss important information in the user’s commands or queries.

Overlaps often occur when the AI Agent misinterprets a pause in the user’s speech as the end of an utterance. By reviewing them, you can fine-tune settings such as Continuous Automated Speech Recognition (ASR) to keep the voice interaction seamless.
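Detecting overlaps amounts to intersecting two sets of time intervals. The sketch below assumes you already have each party's speech segments as sorted, non-overlapping `(start, end)` pairs in seconds, for example read off the trace or produced by a voice activity detector; the `find_overlaps` helper is hypothetical.

```python
from typing import List, Tuple

Segment = Tuple[float, float]  # (start_s, end_s)


def find_overlaps(agent: List[Segment], user: List[Segment]) -> List[Segment]:
    """Return the time spans where agent and user speech overlap.

    Both inputs must be sorted by start time, with no overlaps inside
    the same list. Uses a two-pointer sweep, so it runs in linear time.
    """
    overlaps = []
    i = j = 0
    while i < len(agent) and j < len(user):
        start = max(agent[i][0], user[j][0])
        end = min(agent[i][1], user[j][1])
        if start < end:
            overlaps.append((start, end))
        # Advance whichever segment ends first; it cannot overlap anything later.
        if agent[i][1] < user[j][1]:
            i += 1
        else:
            j += 1
    return overlaps


if __name__ == "__main__":
    agent_speech = [(0.0, 2.0), (4.0, 6.5)]
    user_speech = [(1.5, 3.0), (6.0, 8.0)]
    print(find_overlaps(agent_speech, user_speech))  # prints [(1.5, 2.0), (6.0, 6.5)]
```

Overlaps where the agent starts talking while the user is still mid-sentence are the typical symptom of an end-of-utterance timeout that is too short.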

3. Monitoring Speech Quality

Call Tracing also reveals inconsistencies in the voice agent’s speech at a glance, such as low volume or unexpected drops in the waveform. Recognizing these patterns lets you troubleshoot promptly and maintain service quality, improving the overall customer experience.
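As an illustration of catching low-volume prompts programmatically, the sketch below measures each agent prompt's RMS level in dBFS and flags prompts that fall below an absolute floor or sit well below the median level of all prompts. It assumes mono samples normalized to [-1.0, 1.0]; the prompt names, the -35 dBFS floor, and the 10 dB drop tolerance are hypothetical values chosen for the example.

```python
import math
import statistics
from typing import Dict, List


def rms_dbfs(samples: List[float]) -> float:
    """RMS level in dBFS for samples normalized to [-1.0, 1.0]."""
    if not samples:
        return float("-inf")
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    return 20 * math.log10(rms) if rms > 0 else float("-inf")


def flag_inconsistent_prompts(
    prompts: Dict[str, List[float]],
    floor_dbfs: float = -35.0,
    max_drop_db: float = 10.0,
) -> List[str]:
    """Names of prompts that are too quiet in absolute terms, or much
    quieter than the median prompt (a sign of an unexpected level drop)."""
    levels = {name: rms_dbfs(samples) for name, samples in prompts.items()}
    median_level = statistics.median(levels.values())
    return [
        name for name, level in levels.items()
        if level < floor_dbfs or level < median_level - max_drop_db
    ]


if __name__ == "__main__":
    def tone(amplitude: float, sr: int = 8000) -> List[float]:
        return [amplitude * math.sin(2 * math.pi * 400 * n / sr) for n in range(sr)]

    prompts = {"greeting": tone(0.5), "menu": tone(0.4), "confirm": tone(0.01)}
    print(flag_inconsistent_prompts(prompts))
```

Comparing against the median rather than only a fixed floor catches prompts that are audible but noticeably quieter than the rest of the agent's speech.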

4. Fine-tuning the ASR Model

A more advanced use case combines Call Tracing with Cognigy Insights data to optimize speech recognition models, including custom ones. Specifically, you can examine conversations in which the STT service failed to transcribe user utterances accurately, leading the AI Agent to misunderstand them.

By reviewing the audio recordings and their waveforms, you can determine whether the problem lies in the speaking rate, volume, accent, specific vocabulary, or other factors. Armed with this insight, you can fine-tune the speech model to maximize recognition accuracy.
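One common way to quantify recognition accuracy before and after tuning a model, or when comparing speech services, is word error rate (WER): the word-level edit distance between a reference transcript and the STT output, divided by the number of reference words. The post does not prescribe a metric, so treat this as a generic sketch; `word_error_rate` is a hypothetical helper that lowercases words but does not strip punctuation.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """(substitutions + deletions + insertions) / number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must not be empty")

    # prev[j] holds the edit distance between ref[:i-1] and hyp[:j];
    # only two rows of the dynamic-programming table are kept.
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion (word missing from STT output)
                curr[j - 1] + 1,     # insertion (extra word in STT output)
                prev[j - 1] + cost,  # substitution or match
            )
        prev = curr
    return prev[len(hyp)] / len(ref)


if __name__ == "__main__":
    print(word_error_rate("cancel my order please", "cancel my border please"))  # prints 0.25
```

Computing WER on the same set of problem utterances before and after fine-tuning gives a simple, comparable number for whether the change helped.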

Conclusion

In a nutshell, Cognigy’s Call Tracing acts like a magnifying glass: it highlights not just what is being said but the subtleties of how it is said, offering a wide range of insights into the user experience. These details, often lost in traditional analysis methods, can make a world of difference in how a voice AI Agent interprets and responds to user utterances.

Visit our documentation for more information about the Voice Gateway Self-Service Portal and Call Tracing.
