The 2026.7 release delivers meaningful upgrades across contact center integration, AI Agent testing, and model selection. Here's a close look at what's new.

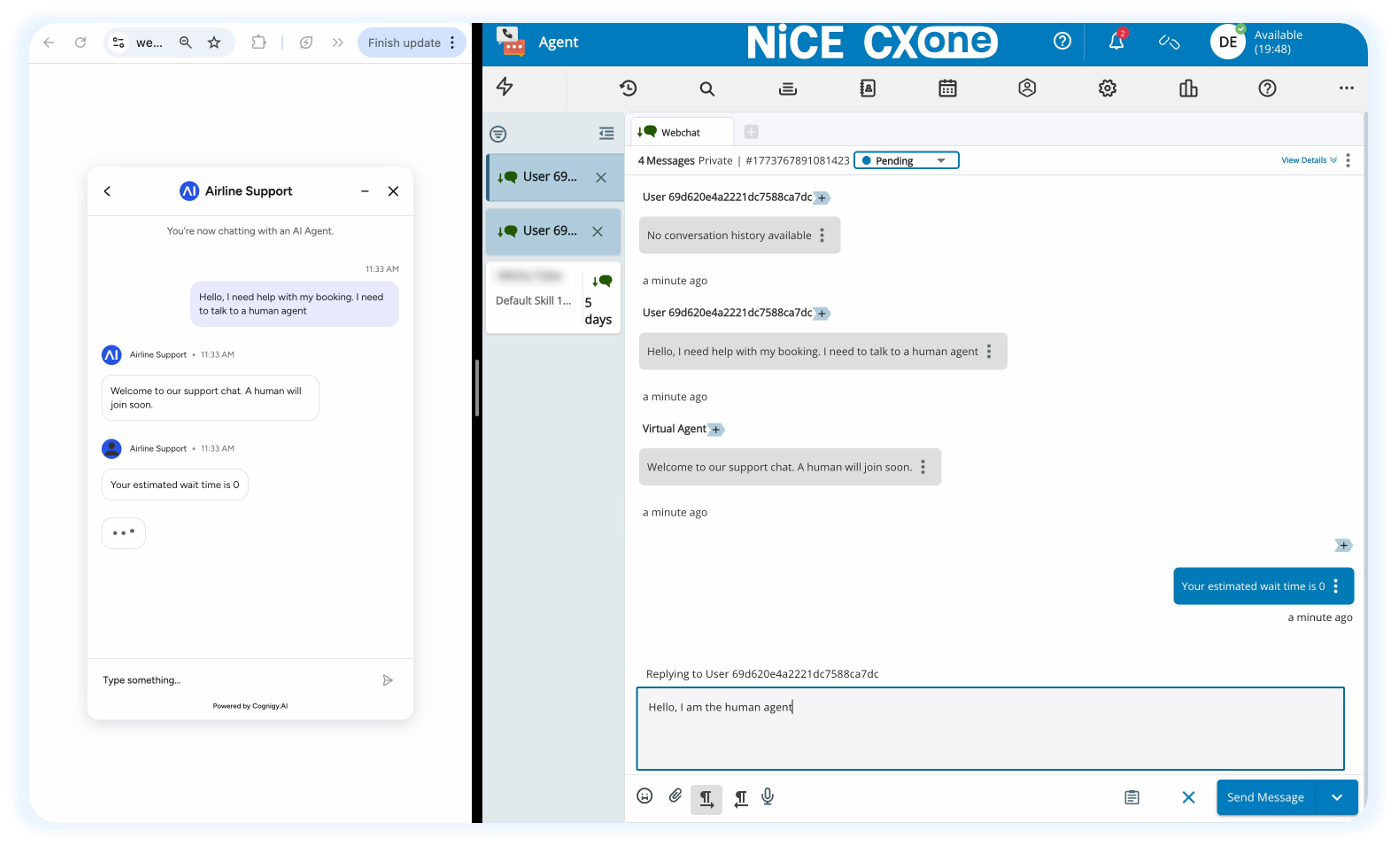

NiCE CXone Handover Provider (Beta)

We’ve introduced a native Handover Provider for NiCE CXone, providing a streamlined, out-of-the-box option for human escalation to one of the most widely used contact center platforms in the enterprise market.

Why It Matters

A standout aspect of this integration is the lightweight setup on the Cognigy side. Rather than manually mapping queues, routing rules, or agent team settings, the Handover Provider automatically picks up the relevant configuration from NiCE CXone.

In practice, setup in Cognigy comes down to creating the Handover Provider under Deploy > Handover Providers, naming it, and attaching it to a Handover to Human Agent Node in your Flow. All routing logic is managed natively within NiCE CXone's Omnichannel Studio, where a Cognigy Handover Script is automatically provisioned and keyed to your provider ID.

If your organization runs on NiCE CXone, this integration brings handover configuration into the standard AI Agent deployment workflow with minimal overhead.

Note: This Handover Provider is only activated for NiCE CXone users.

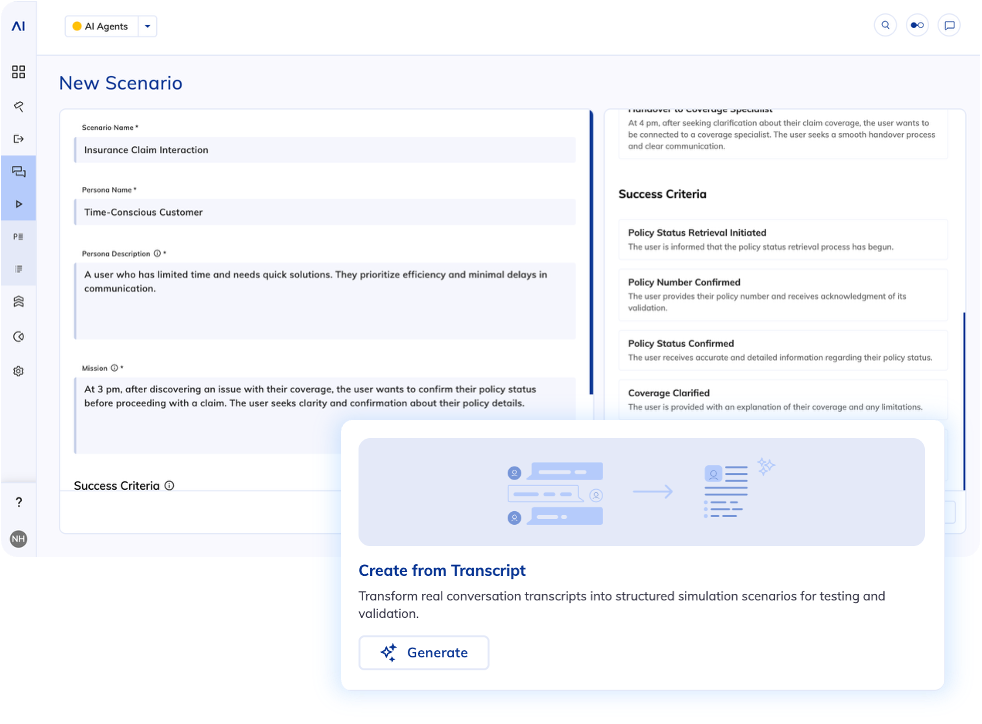

Generate Scenarios from Uploaded Transcripts in the Simulator

Automated scenario generation in the Simulator removes the overhead of manually scripting test cases, whether based on existing AI Agent Jobs or individual transcripts from Cognigy Insights. With the latest release, a third powerful option is now available: generating scenarios directly from uploaded transcripts.

Why It Matters

While Insights transcripts capture real interactions between customers and AI Agents, this new capability expands your testing scope by allowing you to simulate conversations based on previous human-driven interactions. This is especially powerful when building and validating new automation use cases, where no prior AI Agent data exists yet.

By uploading historical transcripts, you can quickly recreate real customer support scenarios and test how your AI Agent would handle them from day one. This enables teams to identify gaps, validate intent coverage, and refine automation strategies early, before going live.

Transforming human-to-human conversations into structured, reusable scenarios for AI Agent evaluation speeds up QA and leads to more reliable, effective automation.

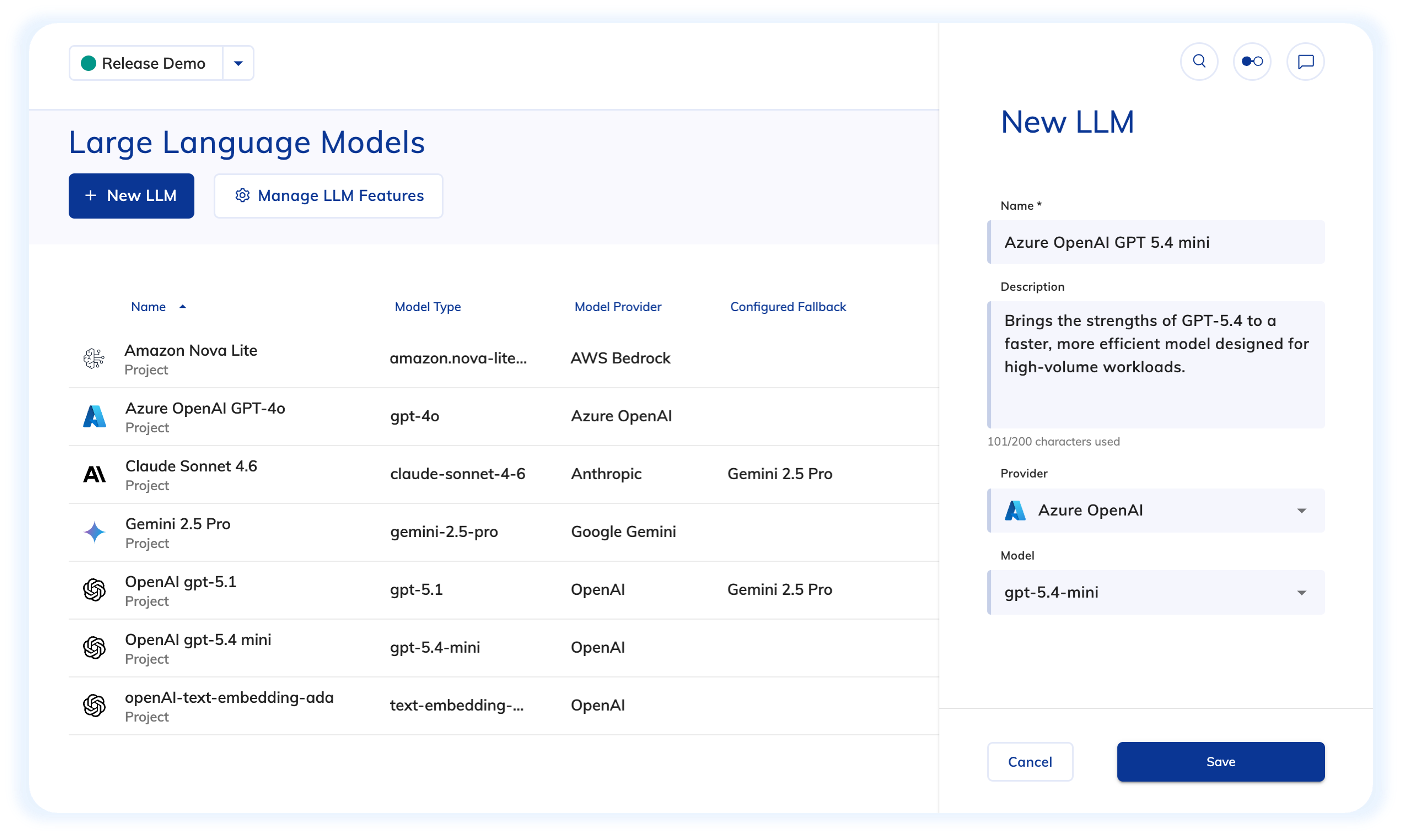

Native Support for the GPT-5.4 Family

Following gpt-5-1 and claude-sonnet-4-6, we’ve added support for three new models from the GPT-5.4 family: gpt-5.4, gpt-5.4-mini, and gpt-5.4-nano, available for both OpenAI and Microsoft Azure OpenAI connections. These models are available immediately through the standard LLM provider configuration in Cognigy.

Why It Matters

The three variants follow a familiar pattern: gpt-5.4 targets maximum performance use cases, while gpt-5.4-mini and gpt-5.4-nano offer progressively lighter and more cost-efficient options. For teams running high-volume contact center deployments, the ability to match model selection to task complexity can have a meaningful impact on both cost and latency.

At the same time, native support for the latest GPT-5.4 models gives teams greater flexibility to adopt and experiment with cutting-edge capabilities as they become available.
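As a rough illustration of matching model selection to task complexity, the sketch below maps three task tiers to the three variants. The tier names and the `pickModel` helper are hypothetical and not part of Cognigy; only the model names come from this release.

```typescript
// Hypothetical complexity tiers, introduced here for illustration only.
type TaskComplexity = "simple" | "moderate" | "complex";

// Only the three model names are taken from the release notes.
const MODEL_BY_COMPLEXITY: Record<TaskComplexity, string> = {
  simple: "gpt-5.4-nano", // lightest, most cost-efficient variant
  moderate: "gpt-5.4-mini", // middle ground between cost and capability
  complex: "gpt-5.4", // maximum-performance variant
};

// Returns the model name assigned to a given task tier.
function pickModel(complexity: TaskComplexity): string {
  return MODEL_BY_COMPLEXITY[complexity];
}

console.log(pickModel("simple"));
console.log(pickModel("complex"));
```

How tasks are sorted into tiers depends on your own deployment, for example by routing short classification steps to the lighter variants and multi-step reasoning to gpt-5.4.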

See the full list of supported LLM vendors and models here.

Other Improvements

Cognigy.AI

- Updated `_.merge()` in Code Nodes so the code editor suggestions now match Lodash’s behavior when you combine objects

- Added the capability to select a time for Knowledge Connector sync

- Improved OAuth2 handling for Azure OpenAI LLM connections. Custom headers are now sent with both the LLM request and the OAuth2 authentication request
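For readers unfamiliar with the merge behavior mentioned above, here is a minimal sketch of the deep-merge semantics that Lodash documents for `_.merge()`. The `deepMerge` function is a simplified, hypothetical stand-in written for this example, not Lodash's implementation: it covers nested plain objects and skipped `undefined` values, and it does not reproduce Lodash's index-by-index merging of arrays.

```typescript
type Plain = { [key: string]: unknown };

// True for non-null, non-array objects.
function isPlainObject(value: unknown): value is Plain {
  return typeof value === "object" && value !== null && !Array.isArray(value);
}

// Simplified stand-in for deep-merge semantics; mutates and returns `target`.
function deepMerge(target: Plain, source: Plain): Plain {
  for (const key of Object.keys(source)) {
    const src = source[key];
    const dst = target[key];
    // An undefined source value does not overwrite an existing target key.
    if (src === undefined && key in target) continue;
    if (isPlainObject(src) && isPlainObject(dst)) {
      deepMerge(dst, src); // nested objects are merged recursively
    } else {
      target[key] = src; // everything else is assigned as-is
    }
  }
  return target;
}

const defaults: Plain = { user: { name: "guest", locale: "en" }, retries: 3 };
const overrides: Plain = { user: { locale: "de" }, retries: undefined };
const merged = deepMerge(defaults, overrides);
console.log(JSON.stringify(merged));
// prints {"user":{"name":"guest","locale":"de"},"retries":3}
```

The nested `user` object keeps `name` from the first object and takes `locale` from the second, which is the main difference from a shallow `Object.assign`.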

Cognigy Live Agent

- Added support for Microsoft 365 OAuth SMTP

Cognigy Insights

- Fixed the `$filter` behavior for the Sessions collection in the Cognigy.AI OData endpoint when values in the `goals` field were stored as `text[]`. Now, filters using string functions, such as `contains`, `startswith`, and `endswith`, work correctly
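The kind of filter affected by this fix can be sketched as follows. The helper names and the base URL are hypothetical, and authentication parameters are omitted; only the `Sessions` collection, the `goals` field, and the three string functions come from the note above.

```typescript
type StringFn = "contains" | "startswith" | "endswith";

// OData string literals are single-quoted; an embedded quote is doubled.
function odataString(value: string): string {
  return `'${value.replace(/'/g, "''")}'`;
}

// Builds a filter expression such as contains(goals,'order').
function stringFilter(fn: StringFn, field: string, value: string): string {
  return `${fn}(${field},${odataString(value)})`;
}

// Appends the URL-encoded filter to a Sessions query.
// `baseUrl` is a placeholder; substitute your own OData endpoint.
function sessionsUrl(baseUrl: string, filter: string): string {
  return `${baseUrl}/Sessions?$filter=${encodeURIComponent(filter)}`;
}

const filter = stringFilter("contains", "goals", "order");
console.log(filter);
console.log(sessionsUrl("https://odata.example.com", filter));
```

Swapping `"contains"` for `"startswith"` or `"endswith"` yields the other two filter forms.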

For further information, check out our complete Release Notes here.
